Articles: 1, 2, 3, 4, 5; Theses: 1, 2, 3, 4, 5; IRUS; Google Analytics

Contact the Open Access team: openaccess@imperial.ac.uk or on (0)20 7594 2608; or visit our webpages at www.imperial.ac.uk/openaccess

Written by Sarah Stewart

Image: Ash Barnes

The Research Data Management service, based in Central Library, has ‘hit the ground running’ at the beginning of 2017. The RDM team will present a series of brief lunch-time talks, the ‘Byte-Size Sessions’, with the first taking place on 14th February. Then, on 17th March, we will host the second ‘Data Circus’, a College-wide showcase of open data and software.

Data and software are both crucial materials for research and products of it, and they underpin scholarly communications. In recent years, funders such as RCUK and the Wellcome Trust have mandated data publication and data sharing with as few restrictions as possible. For instance, the Wellcome Trust Policy on Research Data (2014) states:

‘Making research data widely available to the research community in a timely and responsible manner ensures that these data can be verified, built upon and used to advance knowledge and its application to generate improvements in health.’ (reference)

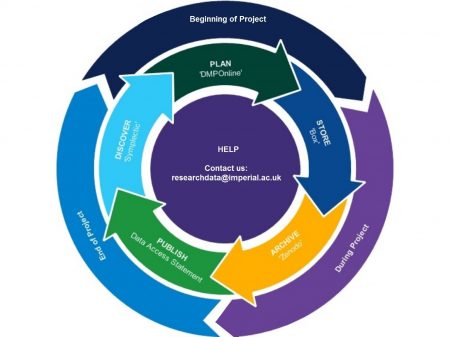

As research data attracts increasing interest and is shared and re-used more widely, good research data management practice has become important at every stage of the research process. At Imperial, the Research Data Management Team have developed a workflow that follows the lifecycle of research data through all of its stages, from data planning and creation through to long-term archiving, publication and sharing (Fig. 1).

Fig. 1: The Research Data Management workflow at Imperial College follows the ‘life cycle’ of research data from the initial planning stages of a research project, through to publication, data sharing and long-term archival storage of research results.

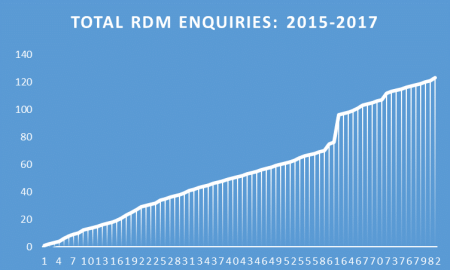

The Research Data Management service has seen a steady increase in the number of queries since its inception (Fig. 2). These queries cover many aspects of research data management and the workflow at Imperial, but the most frequently asked questions concern writing data management plans and creating data access statements.

Fig. 2: The RDM Team has seen a steady increase in the number of enquiries. These enquiries have been mediated through e-mail, telephone and face-to-face meetings.

The Research Data Management Team have been actively involved in outreach, promoting best practice in research data management. We have achieved this through tutorials, webinars, lab group and departmental visits, face-to-face meetings with researchers and faculty, and events such as Research Data ‘Clinics’. It has been a successful first year for the Research Data Management Team at Imperial, and we are looking forward to the year ahead.