There is some good news in this area. We started collecting automated readings from the computer room electricity meters about a year ago and spent quite a lot of time getting all the measurements collected, consolidated and reported consistently. There were some faults with individual meters, but we now have three months of good-quality data, for January, February and March.

| Month | Automated, kWh | Manual, kWh | Delta |
|---|---|---|---|
| January 2012 | 734,837 | 737,441 | 0.35% |
| February 2012 | 713,862 | 713,032 | -0.12% |
| March 2012 | 784,304 | 784,914 | 0.08% |
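The post does not say how the Delta column was calculated. As a minimal sketch, assuming it is the difference between the manual and automated totals relative to the automated total, the following reproduces the three percentages from the table above. The function name `delta_percent` is illustrative only.

```python
# Monthly totals in kWh, copied from the table above: (automated, manual).
readings = {
    "January 2012": (734_837, 737_441),
    "February 2012": (713_862, 713_032),
    "March 2012": (784_304, 784_914),
}


def delta_percent(automated_kwh, manual_kwh):
    """Difference of the manual total from the automated total, in percent.

    Assumption: the table's Delta is (manual - automated) / automated.
    """
    return (manual_kwh - automated_kwh) / automated_kwh * 100


# Round to two decimals, as in the table.
deltas = {
    month: round(delta_percent(automated, manual), 2)
    for month, (automated, manual) in readings.items()
}

for month, delta in deltas.items():
    print(f"{month}: {delta:+.2f}%")
```

A positive value means the manual reading was higher than the automated one. In all three months the two differ by well under half a percent.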

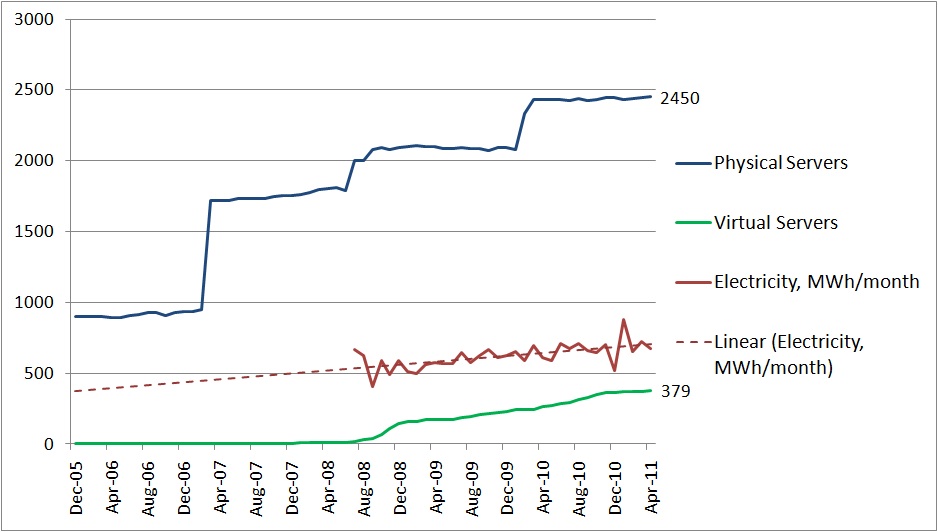

The automated data matches the manual readings and also provides readings at 30-minute intervals throughout the day. Now that the automated data can be trusted, we can use it in the environment Dashboard to link energy consumption with service metrics. I have started looking into service metrics that can represent demand for resources (number of servers, number of applications, number of systems, etc.) and into ways of publishing a monthly report, which we aim to distribute to ICT and system owners. We already do this for PCs to raise awareness, and it makes sense for servers too.
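One way the half-hourly readings could feed such a monthly report is sketched below: sum the interval readings into monthly totals, then divide by a service metric such as the number of servers. This is only an illustration. The function names, the sample readings and the server count are all made up and are not taken from our meters or inventory.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def monthly_totals(interval_readings):
    """Sum (timestamp, kWh) interval readings into totals keyed by 'YYYY-MM'."""
    totals = defaultdict(float)
    for timestamp, kwh in interval_readings:
        totals[timestamp.strftime("%Y-%m")] += kwh
    return dict(totals)


def kwh_per_unit(total_kwh, unit_count):
    """Energy per unit of a service metric, e.g. kWh per server."""
    if unit_count <= 0:
        raise ValueError("unit_count must be positive")
    return total_kwh / unit_count


# Made-up sample: one day of 48 half-hourly readings of 500 kWh each.
start = datetime(2012, 1, 1)
sample = [(start + timedelta(minutes=30 * i), 500.0) for i in range(48)]

totals = monthly_totals(sample)

# Hypothetical server count, for illustration only.
per_server = kwh_per_unit(totals["2012-01"], 100)
```

The same ratio could be calculated for the number of applications or systems, depending on which metric turns out to represent demand best.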